Music appreciation has historically been a passive activity. We listen to what is broadcast, streamed, or performed, bounded by the catalogs of major labels and the algorithms of streaming services. For students, educators, and cultural enthusiasts, understanding the nuances of a specific genre (say, the difference between a Brazilian Bossa Nova and a Cuban Son) often requires extensive research or access to specialized libraries. The advent of the AI Music Generator introduces a new dimension to this exploration: active synthesis. Instead of merely searching for an existing track that matches a set of criteria, users can now construct musical prototypes to test their understanding of genre, rhythm, and instrumentation.

This shift from consumption to creation changes the learning curve. By manipulating parameters like tempo, mood, and style tags, a user can immediately hear theoretical concepts rendered as sound. If a student wants to hear what a “Baroque fugue played on synthesizers” sounds like, they no longer need a degree in composition and a rack of electronic gear; they simply need to articulate the concept. This capability transforms the platform from a mere production tool into a sandbox for acoustic experimentation, allowing for cross-cultural and cross-temporal musical collisions that might never occur in the physical world.

The Mechanics of Style Transfer and Genre Blending

The core engine driving platforms like ToMusic is built on analyzing vast datasets of audio waveforms and their associated metadata. When a user inputs a complex prompt combining seemingly unrelated tags (for example, “Heavy Metal” and “Bluegrass”), the AI does not just overlay two tracks. It attempts to synthesize a new waveform that respects the sonic textures of both. This process, often loosely referred to as style transfer, requires the model (specifically the more advanced V3 and V4 iterations) to capture the structural rules of each genre and find a mathematical compromise between them.

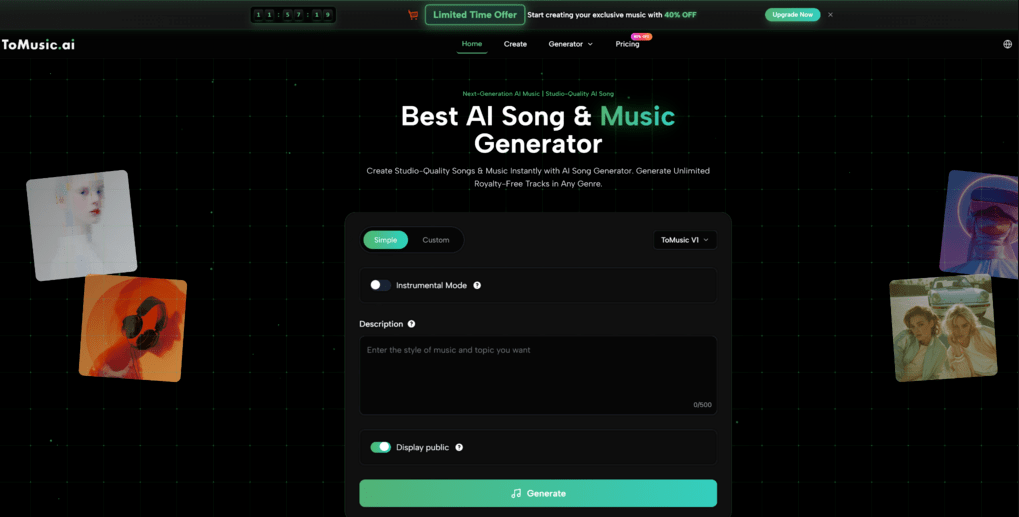

Executing a Structured Musical Experiment

To effectively use this technology for exploration rather than just random generation, one must approach the interface with a hypothesis-driven mindset. The goal is to see how the AI interprets specific cultural or stylistic inputs. Based on the standard workflow of the platform, the following steps yield the most educational insights into musical structure.

Step One: Defining the Genre Constraints

In the “Text to Music” panel, the user must look beyond simple emotion tags (like “Sad” or “Happy”). The power lies in specificity. Inputting “Traditional Japanese Koto with 80s Synthwave Bass” forces the AI to reconcile organic, plucked string attacks with sustained, electronic low-end frequencies. This stage tests the model’s training data regarding specific instrument timbres and rhythmic patterns.

Step Two: Analyzing the Compositional Output

Once the track is generated, the focus shifts to analysis. The “Lyrics to Music” feature can be particularly revealing here. By inputting a neutral text (like a weather report) and applying different genre styles, one can observe how the prosody (the rhythm of the speech) changes. A “Rap” setting will tend to force the text into a strict 4/4 meter with syncopation, while an “Opera” setting will elongate vowels and ignore the natural speech rhythm in favor of melodic contour.
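The weather-report experiment above is a controlled comparison: the text stays fixed and only the style changes. A minimal sketch of how such a run plan could be written down before generating anything is shown below. The field names `lyrics` and `style`, the sample sentence, and the helper `build_experiments` are illustrative assumptions, not the platform's actual input format.

```python
from itertools import product

# Hypothetical field names and sample text, chosen only for illustration.
NEUTRAL_TEXT = "Light rain is expected in the afternoon, clearing by evening."
STYLES = ["Rap", "Opera"]


def build_experiments(texts, styles):
    """Pair every text with every style so that only the style varies per text."""
    return [{"lyrics": text, "style": style} for text, style in product(texts, styles)]


if __name__ == "__main__":
    # Print the plan; each row would be entered into the generator by hand.
    for run in build_experiments([NEUTRAL_TEXT], STYLES):
        print(f"{run['style']:<6} | {run['lyrics']}")
```

Writing the plan out first makes it easier to attribute any difference in prosody to the style tag rather than to a change in wording.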

Step Three: Isolating Elements for Study

For a deeper dive, utilizing the “Extract Stems” feature allows the user to listen to the drum track or the bass line in isolation. This is invaluable for understanding how a specific genre is constructed. Hearing the drum pattern of a generated “Reggaeton” track in isolation reveals the specific “Dem Bow” rhythm that defines the style, stripping away the distraction of melody and harmony.
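To know what to listen for in the isolated drum stem, it can help to see the rhythm written out. The sketch below prints one bar of a commonly notated, simplified version of the pattern on a sixteenth-note grid. The exact step positions are an assumption based on that common notation; real tracks add variations, and the names `DEMBOW`, `render_row`, and `render_pattern` are made up for this example.

```python
STEPS = 16  # one bar of 4/4 divided into sixteenth notes

# Simplified, commonly notated version of the groove (an assumption; real
# tracks vary): kick on every beat, snare on an offbeat 3+3+2 style figure.
DEMBOW = {
    "kick": [0, 4, 8, 12],
    "snare": [3, 6, 11, 14],
}


def render_row(hits, steps=STEPS):
    """Return a string with 'x' at each hit position and '.' elsewhere."""
    hit_set = set(hits)
    return "".join("x" if i in hit_set else "." for i in range(steps))


def render_pattern(pattern):
    """Render each instrument as a labelled row, split into four beats."""
    lines = []
    for name, hits in pattern.items():
        row = render_row(hits)
        beats = " ".join(row[i:i + 4] for i in range(0, STEPS, 4))
        lines.append(f"{name:<6}{beats}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_pattern(DEMBOW))
```

Comparing this grid with the extracted drum stem shows whether the generated track keeps the steady kick and the offbeat snare figure, or drifts toward a generic pop backbeat.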

Evaluating the Authenticity of AI Instrumentation

A critical question for any enthusiast is the fidelity of the simulation. In my assessment of generated tracks ranging from “Orchestral” to “Lo-Fi,” the results are a mix of impressive accuracy and digital hallucination. The V4 model demonstrates a strong grasp of the Western 12-tone equal-tempered system and standard pop instrumentation (guitars, drums, pianos). However, when pushed into non-Western microtonal scales or the complex polyrhythms found in traditional African or Indian classical music, the AI sometimes “quantizes” the result, smoothing out the intricate irregularities that give the music its human feel.
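To make the idea of “quantizing” concrete, the sketch below snaps values to a fixed grid, once for pitch (in cents, where 100 cents is one equal-tempered semitone) and once for timing (in beats). It illustrates what is lost when a microtonal interval or a triplet figure is forced onto a coarser grid; it is not a description of how the model works internally, and the function names and sample values are chosen only for this example.

```python
import math


def freq_to_cents(freq_hz, ref_hz):
    """Interval from ref_hz up to freq_hz in cents (1200 cents per octave)."""
    return 1200.0 * math.log2(freq_hz / ref_hz)


def snap(value, grid):
    """Snap a value to the nearest multiple of grid."""
    return round(value / grid) * grid


if __name__ == "__main__":
    # Pitch: an interval of 355 cents lies between the minor third (300)
    # and the major third (400) of the 12-tone grid.
    interval = 355
    snapped = snap(interval, 100)
    print(f"{interval} cents -> {snapped} cents (shifted by {snapped - interval})")

    # Timing: three evenly spaced triplet onsets within one beat,
    # forced onto a sixteenth-note grid (0.25 of a beat).
    triplets = [0, 1 / 3, 2 / 3]
    print([snap(t, 0.25) for t in triplets])
```

After snapping, the triplet onsets are no longer evenly spaced, which is the kind of smoothing described above when a polyrhythm is pulled toward a straight grid.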

The Role of Hallucination in Creative Discovery

Interestingly, the “errors” or “hallucinations” made by the AI can be creatively stimulating. When the model attempts to bridge two incompatible genres, it often creates a sound that doesn’t exist in reality—a “glitch” that sounds like a new instrument. For experimental composers, these artifacts are not failures but raw materials. The AI’s attempt to simulate a choir might result in a ghostly, synthetic texture that works perfectly for a horror score, even if it fails to sound like a real human choir.

Contrasting Passive Listening with Active Synthesis

To visualize the educational shift provided by generative audio, we can compare the traditional method of music appreciation with the AI-assisted model.

| Educational Aspect | Traditional Music Appreciation | AI-Assisted Musical Exploration |
| --- | --- | --- |
| Method of Study | Listening to existing recordings | Generating new examples |
| Genre Accessibility | Limited to available library | Limited only by vocabulary |
| Interaction Type | Passive (Consumer) | Active (Director) |
| Structural Insight | Requires ear training to hear parts | Stem separation makes parts clear |
| Cross-Over Potential | Rare (Mashups are difficult) | Instant (Infinite combinations) |
| Cost of Discovery | High (Buying albums/tickets) | Low (Credit usage) |
| Cultural Accuracy | 100% (Real recordings) | Variable (Approximations) |

The Future of Interactive Musicology

As these models continue to ingest more diverse training data, their ability to represent niche global styles will improve. We are approaching a point where a student in a classroom can type “1920s Delta Blues” and instantly generate a unique, copyright-free example that demonstrates the slide guitar techniques and lyrical themes of that era. The AI Music Generator is thus evolving from a novelty toy into a digital ethnomusicologist, preserving and remixing the mathematical DNA of human culture for a new generation of digital explorers.