For many people, the hardest part of making music is not the idea itself but knowing how to begin. A melody exists in your head and a mood feels clear, but translating them into an actual track requires tools, structure, and technical confidence. This is where platforms like AI Song Maker start to shift the experience. They do not simplify creativity. They simplify the path toward expression.

In my observation, what feels different is not just speed, but the removal of hesitation. Instead of asking “how do I build this track,” users begin with “what should this feel like.” That change reduces friction. It also changes who feels capable of making music in the first place.

The platform does not attempt to replicate traditional production workflows. Instead, it reorganizes the process around description, interpretation, and iteration. That alone makes it worth understanding as a new category rather than just another tool.

Why 2026 AI Song Maker Tools Focus On Interpretation First

Most music tools have historically focused on control. You control instruments, timing, layers, and mixing decisions. AISong shifts that emphasis toward interpretation.

From Input Precision To Intent Clarity

Rather than requiring exact parameters, the system accepts:

- Style descriptions

- Emotional direction

- Genre references

- Optional lyrical content

In practice, this reduces cognitive load. You do not need to define everything, only enough for the system to interpret.

Why Interpretation Introduces Creative Variability

Because the system interprets rather than executes strict instructions, outputs are not identical even with similar prompts.

This variability is not necessarily a flaw. In early-stage creation, it functions more like exploration:

- Slightly different melodies emerge

- Vocal tones vary across generations

- Structural pacing shifts subtly

This creates a space where discovery becomes part of the workflow.

How AISong Translates Descriptions Into Structured Music

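The generation process is not arbitrary. Text inputs shape the resulting audio at several layers: the prompt sets overall direction, lyrics anchor rhythm and vocals, and the system fills in song structure.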

How Prompts Define Direction Without Overconstraint

Short prompts guide:

- Overall tone

- Genre alignment

- Instrumental density

However, they leave room for the system to construct transitions and phrasing.

How Lyrics Anchor Rhythm And Vocal Structure

When lyrics are provided, the system aligns:

- Word pacing with rhythm

- Emotional emphasis with melody

- Section boundaries with lyrical flow

In my testing, lyric-driven generation in AI Song Generator tended to produce more cohesive vocal tracks.

How Automatic Structuring Shapes The Listening Experience

Even without explicit instructions, outputs often include:

- Intro sections

- Repeating choruses

- Transitional bridges

This suggests that the system encodes common song-structure conventions and applies them by default.

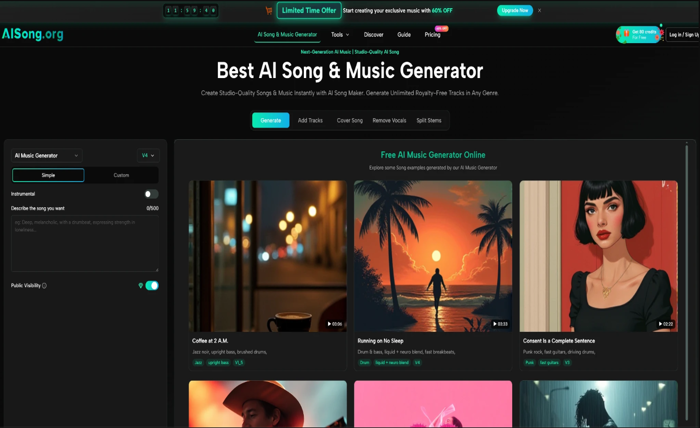

Step-Based Creation Flow Reflecting Actual Platform Use

Step 1 Select Generator Entry And Creation Mode

Users begin by choosing between a simplified mode for faster output or a more detailed mode that allows greater input control.

Step 2 Define Song Through Prompt Or Lyrics Input

This step includes entering:

- Concept or title

- Genre and mood

- Optional full lyrics

- Additional stylistic cues

The clarity here directly influences the output.

Step 3 Generate Music Through Model Selection And Credits

After defining inputs, users select a model version and trigger generation, which consumes credits. Different model versions may interpret the same inputs slightly differently.

Step 4 Continue Editing Using Built-In Audio Tools

Post-generation tools allow users to:

- Extend tracks

- Separate vocals from instrumentals

- Add missing musical layers

This extends a track's usefulness beyond the initial generation.

Comparing AI Song Maker Systems With Conventional Production

| Dimension | AISong System | Conventional Production |
| --- | --- | --- |
| Starting Point | Idea or description | Composition and arrangement |
| Required Skills | Low to moderate | High technical skill |
| Output Speed | Immediate to minutes | Hours or longer |
| Iteration Model | Regenerate quickly | Re-edit manually |
| Flexibility | High in exploration | High in precision |

The key difference is not capability, but where effort is invested. AISong emphasizes fast iteration, while traditional tools emphasize detailed control.

How This Tool Fits Into Different Creative Workflows

Fast Iteration For Social Content Creators

Short-form creators benefit from generating multiple variations quickly. Instead of refining a single track, they can explore several directions and choose what fits best.

Audio Prototyping For Musicians

Musicians can use generated tracks as starting points. This shortens the gap between idea and rough draft, especially when experimenting with unfamiliar styles.

Narrative-Driven Music For Writers

Writers can convert emotional or narrative concepts into sound without needing to learn production tools. This aligns well with lyric-based generation.

Limitations That Still Shape Real World Usage

Dependence On Prompt Quality

Output quality reflects the clarity of the input. Ambiguous prompts often lead to generic results.

Iteration Is Still Part Of The Process

While generation is fast, achieving a precise vision often requires multiple attempts.

Detailed Control Remains Limited

Compared to full production environments, the system offers little fine-grained control over details such as exact mix levels or timing.

Why 2026 AI Song Maker Tools Represent A Workflow Shift

The significance of tools like AISong lies less in automation and more in translation.

Instead of building music step by step, users describe intent and let the system construct structure. This redefines where creative effort happens.

In that sense, the tool does not replace traditional production. It repositions it. Creation becomes more accessible, while refinement remains a separate layer.

For many users, that is enough to turn an idea into something real.